TEACh: Task-Driven Embodied Agents that Chat

Aishwarya Padmakumar, Jesse Thomason, Ayush Shrivastava, Patrick Lange, Anjali Narayan-Chen, Spandana Gella, Robinson Piramuthu, Gokhan Tur, Dilek Hakkani-Tur

[AAAI-22] Main Track

Abstract:

We introduce TEACh, a dataset of over 3,000 human-human, interactive dialogues to complete household tasks in simulation. A Commander with access to oracle information about a task communicates in natural language with a Follower. The Follower navigates through and interacts with the environment to complete tasks varying in complexity from "Make Coffee" to "Prepare Breakfast", asking questions and getting additional information from the Commander. Robots operating in human spaces must be able to engage in such natural language interaction with people, both understanding and executing instructions and using conversation to resolve ambiguity and recover from mistakes. We propose three benchmarks using TEACh to study embodied intelligence challenges, and we evaluate initial models' abilities in dialogue understanding, language grounding, and task execution.


Sessions where this paper appears

-

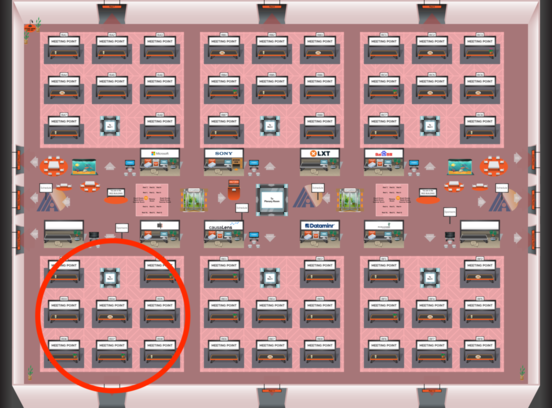

Poster Session 6

Sat, February 26 8:45 AM - 10:30 AM (+00:00)

Sat, February 26 8:45 AM - 10:30 AM (+00:00)

Red 4

Red 4

-

Poster Session 7

Sat, February 26 4:45 PM - 6:30 PM (+00:00)

Sat, February 26 4:45 PM - 6:30 PM (+00:00)

Red 4

Red 4

-

Oral Session 7

Sat, February 26 6:30 PM - 7:45 PM (+00:00)

Sat, February 26 6:30 PM - 7:45 PM (+00:00)

Red 4

Red 4